The failure each signal commits

A neural score says "high attention" for an ad that flopped because the joke was stale. A linguistic score says "high persuasion density" for an ad that bored people. A historical benchmark says "this will work" for a creative format that died last quarter. A cultural relevance score says "on-trend" for an ad that was poorly made.

Every single-signal prediction has a characteristic shape of failure. The shapes differ from family to family, which is the point. Anyone who tries to predict behavior with one signal family will hit a ceiling set by failures the other families would have caught. Anyone who integrates all four crosses it.

Neural, linguistic, cultural, historical. Anyone predicting with one family hits a ceiling. Anyone integrating all four crosses it.

The four signals, defined

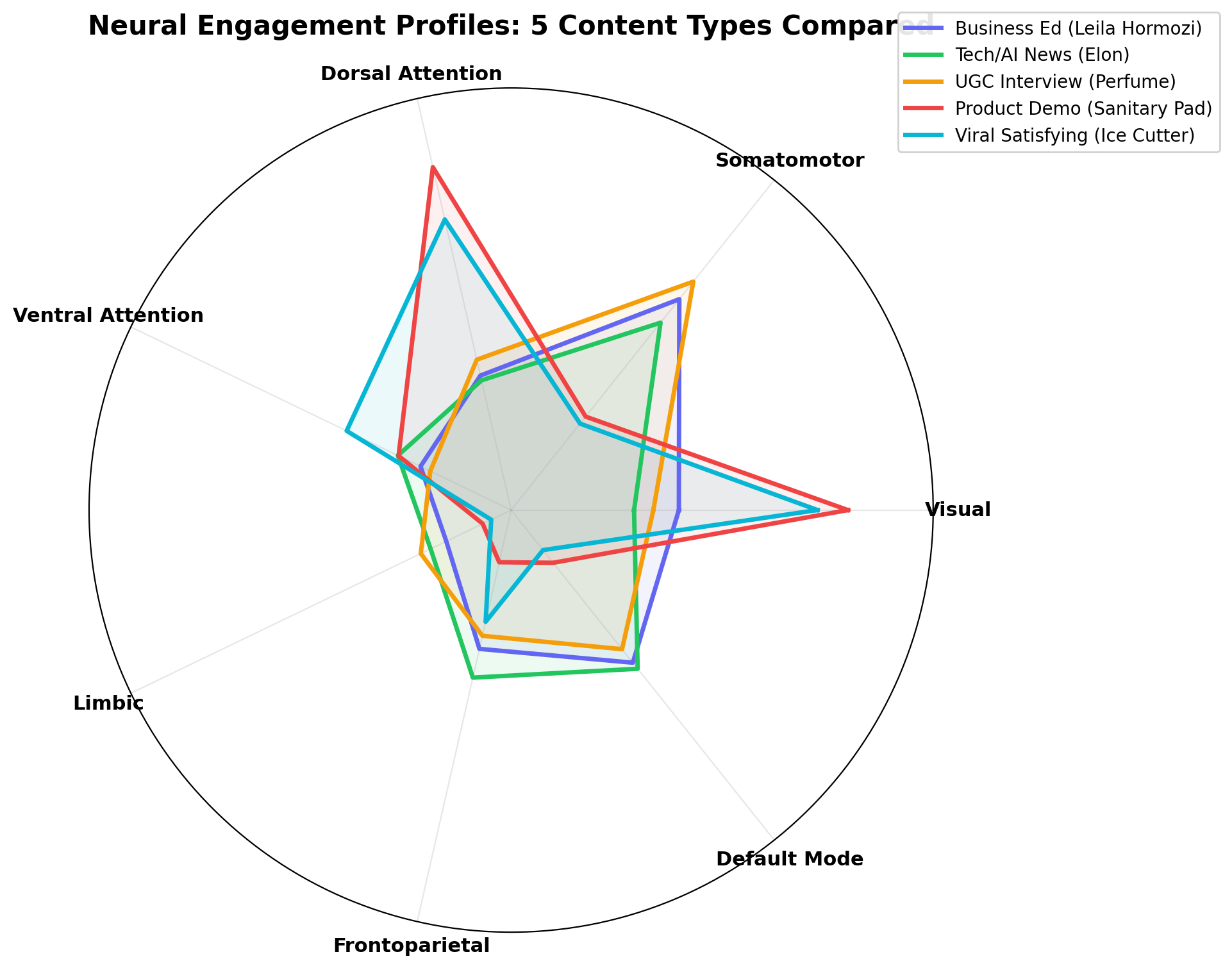

Neural. Predicted cortical response to the stimulus, from forward encoding models. What does a typical human brain do when it meets this video, this image, this word sequence? Tools: TRIBE v2[1], MindEye and MindEye2[2], Huth Lab semantic maps[3], Algonauts benchmarks[4].

Linguistic. Psycholinguistic features of the content itself. Arousal and valence, persuasion markers, concreteness, emotional arc, style entropy. Tools: LIWC, VADER, narrative arc extraction, LLM embedding similarity.

Cultural. Distance from the current zeitgeist. Not history, not biology. Live conversation proximity. Tools: Google Trends, platform-specific virality data, memetic salience indexes, language drift detection.

Historical. How have structurally similar pieces performed in market? Matched via ad library archives, creative intelligence taxonomies, and paid social benchmarks conditioned on audience and objective.
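If it helps to see the four families as one record per creative, here is a minimal Python sketch. The class name, the field names, and the assumption that each family has already been reduced to a single score in [0, 1] are illustrative choices, not a schema taken from any of the tools listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SignalScores:
    """Hypothetical per-creative record holding one score per signal family.

    Assumes each upstream pipeline has already normalized its output to [0, 1].
    """

    neural: float      # predicted cortical response from an encoding model
    linguistic: float  # psycholinguistic features of the content itself
    cultural: float    # proximity to the live conversation
    historical: float  # performance of structurally similar past creatives

    def __post_init__(self) -> None:
        # Reject out-of-range scores so downstream fusion can assume a shared scale.
        for name, value in self.as_dict().items():
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} score {value} is outside [0, 1]")

    def as_dict(self) -> dict[str, float]:
        """Return the scores keyed by family name, in a fixed order."""
        return {
            "neural": self.neural,
            "linguistic": self.linguistic,
            "cultural": self.cultural,
            "historical": self.historical,
        }
```

Keeping the families as named fields, rather than an anonymous list, makes it harder to mix up which score came from which family when they are later calibrated and fused.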

The diagram

The four signals sort onto two axes. The vertical axis runs from biological substrate at the top to cognitive content at the bottom. The horizontal axis runs from individual sample on the left to population scale on the right. Neural sits top-left. Linguistic sits bottom-left. Cultural sits bottom-right. Historical sits bottom-right, further toward population-over-time.

Each family has a ceiling that the others' strengths happen to cover. That complementarity is why fusion works. It is not a design choice. It is a property of the signal families themselves.

Why fusion is harder than it sounds

Baltrusaitis, Ahuja, and Morency's 2018 TPAMI survey[5] catalogs three model-agnostic ways to fuse multimodal signals. Early fusion concatenates raw features and loses family-specific structure. Late fusion averages the scores each family produces and loses cross-family interaction. Hybrid fusion combines the two, and in its modern form, with cross-attention layers that let each family condition on the others, it is the state of the art.
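To make the strategies concrete, here is a minimal plain-Python sketch. Everything in it is an illustrative assumption: the function names are hypothetical, each family is assumed to arrive as a raw feature vector, a single score, or an embedding, and the last function shows only the cross-attention ingredient of hybrid fusion, with identity projections where a trained model would learn query, key, and value matrices.

```python
import math


def early_fusion(features):
    """Concatenate raw per-family feature vectors into one flat vector.

    Families are sorted by name so the layout is deterministic; nothing in
    the output records which family a feature came from.
    """
    return [x for family in sorted(features) for x in features[family]]


def late_fusion(scores):
    """Average the scores each family's own model produced; no interaction."""
    return sum(scores.values()) / len(scores)


def _softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def _attend(query, keys, values):
    """Single-head scaled dot-product attention for one query vector."""
    scale = 1.0 / math.sqrt(len(query))
    logits = [scale * sum(q * k for q, k in zip(query, key)) for key in keys]
    weights = _softmax(logits)
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(len(values[0]))]


def cross_family_attention(embeddings):
    """Let each family's embedding attend over every other family's embedding.

    Requires at least two families. Identity projections stand in for learned
    query/key/value matrices; in a real hybrid model the returned context
    vectors would feed a prediction head alongside the per-family scores.
    """
    context = {}
    for family, query in embeddings.items():
        others = [emb for name, emb in embeddings.items() if name != family]
        context[family] = _attend(query, others, others)
    return context
```

Note that `late_fusion` returns the same number for any set of scores with the same mean, which is exactly the lost cross-family interaction: it cannot tell a creative carried by two strong families from one where all four are middling.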

The engineering reality is less elegant. Calibration across signal families is the load-bearing problem. Neural signals have one distribution and one noise profile; historical data has another. Cultural features drift quickly; linguistic features are comparatively stable. Making the combined predictor well-calibrated under distribution shift is where most of the work actually lives. Anyone who treats fusion as "ensemble the scores" has not yet built a production system.
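One common way to attack this is to calibrate each family separately before fusing, and to refit the families that drift on shorter windows of recent outcomes. The sketch below assumes histogram binning as the calibrator (Platt scaling and isotonic regression are common alternatives), binary outcomes, raw scores in [0, 1], and hypothetical per-family window lengths; it illustrates the idea and is not a production pipeline.

```python
def fit_histogram_binning(scores, outcomes, n_bins=5):
    """Map each equal-width score bin to the observed positive rate in that bin.

    Empty bins fall back to the bin midpoint, i.e. the raw score is trusted there.
    """
    counts = [0] * n_bins
    positives = [0] * n_bins
    for score, outcome in zip(scores, outcomes):
        b = min(int(score * n_bins), n_bins - 1)
        counts[b] += 1
        positives[b] += outcome
    return [
        positives[b] / counts[b] if counts[b] else (b + 0.5) / n_bins
        for b in range(n_bins)
    ]


def calibrate(table, score):
    """Look up the calibrated probability for one raw score."""
    n_bins = len(table)
    return table[min(int(score * n_bins), n_bins - 1)]


def fit_family_calibrators(history, windows, n_bins=5):
    """Fit one calibrator per family on its most recent observations only.

    history: family -> list of (raw_score, outcome) pairs, oldest first.
    windows: family -> positive number of recent pairs to fit on. A short
    window tracks a drifting family; a long one trades responsiveness for
    lower variance.
    """
    calibrators = {}
    for family, pairs in history.items():
        recent = pairs[-windows[family]:]
        calibrators[family] = fit_histogram_binning(
            [score for score, _ in recent],
            [outcome for _, outcome in recent],
            n_bins,
        )
    return calibrators


history = {
    "linguistic": [(0.1, 0), (0.15, 0), (0.9, 1), (0.95, 1)],
    "cultural": [(0.9, 1), (0.9, 1), (0.9, 0), (0.9, 0)],
}
# Hypothetical windows: linguistic uses all four pairs, cultural only the latest two.
cal = fit_family_calibrators(history, windows={"linguistic": 4, "cultural": 2})
print(calibrate(cal["linguistic"], 0.92))  # 1.0
print(calibrate(cal["cultural"], 0.9))     # 0.0, the two most recent outcomes were misses
```

The cultural example shows the point of the short window: the same raw score of 0.9 that used to succeed now maps to a low calibrated probability, because only the post-shift outcomes are used.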

Precedent in adjacent fields

Multimodal fusion is how most of the strongest recent prediction systems got good. CLIP[6] aligned text and images in a shared embedding space. Flamingo[7] fused vision and language for few-shot learning. ImageBind[8] bound six modalities, including audio, depth, and thermal, into one embedding space. AlphaFold[9] fused evolutionary, structural, and chemical priors.

Medicine repeated the pattern. Single-modality CNNs in dermatology and chest X-rays plateaued. The Acosta et al. 2022 review in Nature Medicine[10] reports multimodal biomedical AI lifting AUC by five to fifteen points over the best single-modality baselines. Not always. Consistently enough to matter.

IBM Watson DeepQA[11] was an explicit signal-integration architecture. So are Watson's successors, every modern search ranker, and every serious fraud detection system. The pattern is not incidental. It is structural.

What fusion buys a predictive product

Consider a single video creative. The neural signal says 0.72 predicted engagement: above-average cortical response, driven mainly by the temporoparietal junction and precuneus. The linguistic signal says 0.58: decent persuasion density, weak narrative arc. The cultural signal says 0.81: the central metaphor maps onto a meme that is spiking this week. The historical signal says 0.40: the nearest matches in the ad library underperformed after week three.

A late-fusion ensemble averages those to 0.63. A hybrid-fusion predictor that has learned how the families interact says something closer to "high cultural + high neural can rescue weak historical, but only for the first two weeks of flight." That kind of statement is impossible to produce from any single family. It is cheap to produce if you have all four and a model that can reason across them.
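The arithmetic, and the kind of conditional statement a plain average cannot express, fit in a few lines. The interaction rule below is hand-written purely for illustration: the 0.7 threshold, the two-week window, and setting historical aside entirely are hypothetical stand-ins for what a hybrid-fusion model would learn from data, not its actual learned behavior.

```python
SCORES = {"neural": 0.72, "linguistic": 0.58, "cultural": 0.81, "historical": 0.40}


def late_fusion(scores):
    """Unweighted mean of the family scores: no cross-family interaction."""
    return sum(scores.values()) / len(scores)


def interaction_aware_score(scores, week_of_flight, high=0.7, rescue_weeks=2):
    """Hand-written stand-in for a learned cross-family interaction.

    When cultural and neural are both high, weak historical evidence is set
    aside for the first `rescue_weeks` weeks of flight; after that, every
    family counts equally again. All thresholds are hypothetical.
    """
    rescued = (
        scores["cultural"] >= high
        and scores["neural"] >= high
        and week_of_flight <= rescue_weeks
    )
    if rescued:
        return late_fusion({k: v for k, v in scores.items() if k != "historical"})
    return late_fusion(scores)


print(round(late_fusion(SCORES), 2))                 # 0.63
print(round(interaction_aware_score(SCORES, 1), 2))  # 0.7
print(round(interaction_aware_score(SCORES, 4), 2))  # 0.63
```

The late average is the same number in week one and week four; the interaction-aware score changes with the combination of families and with time in flight, which is the shape of statement the paragraph describes.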

What this framework does not claim

It is not a theory of human behavior. It does not say that neural, linguistic, cultural, and historical are the only signals that exist, or that they carve cognition at its joints. It is a decomposition of the prediction problem, chosen because the four families happen to be instrumentable at commodity prices in 2026 and happen to have complementary failure modes.

We prefer pragmatic usefulness over metaphysical truth. A framework earns its keep by the calibration curves it produces, not by the elegance of its axes.
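What "calibration curves" means operationally can be sketched as a reliability curve plus expected calibration error (ECE), a common one-number summary of it. Equal-width binning, binary outcomes, and the function names are illustrative assumptions here, not a published methodology.

```python
def reliability_curve(predictions, outcomes, n_bins=10):
    """Return (mean predicted probability, observed rate, count) per non-empty bin.

    Predictions are assumed to lie in [0, 1]; outcomes are 0/1. A well-calibrated
    predictor has a mean prediction close to the observed rate in every bin.
    """
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(predictions, outcomes):
        bins[min(int(p * n_bins), n_bins - 1)].append((p, y))
    curve = []
    for contents in bins:
        if contents:
            mean_pred = sum(p for p, _ in contents) / len(contents)
            observed = sum(y for _, y in contents) / len(contents)
            curve.append((mean_pred, observed, len(contents)))
    return curve


def expected_calibration_error(predictions, outcomes, n_bins=10):
    """Count-weighted mean gap between predicted and observed rates across bins."""
    n = len(predictions)
    return sum(
        count / n * abs(mean_pred - observed)
        for mean_pred, observed, count in reliability_curve(predictions, outcomes, n_bins)
    )
```

Computing the curve separately for each family and for the fused predictor, on held-out creatives, is one way to check whether fusion actually earns its keep.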

The category implication

The field is in the middle of a decision it has not yet admitted. One path scales a single signal, usually simulated panels, and calls it prediction. The other reorganizes around fusion and rebuilds the category's infrastructure.

We have written separately about why the first path is a repeat of the last twenty years of neuromarketing (see the scale manifesto), why we chose to publish our calibration studies rather than asserting accuracy (see why we publish), and what legacy neuromarketing actually delivered (see what is neuromarketing in 2026). This framework is the spine those pieces hang off.

If the field organizes around fusion, the ceiling moves. If it organizes around one signal, we all plateau together. OpenAffect builds on all four.

References

- [1] Meta AI. TRIBE v2: A brain predictive foundation model. 2026.

- [2] Scotti et al. MindEye and MindEye2. NeurIPS 2023, ICML 2024.

- [3] Tang, LeBel, Jain, Huth. Semantic reconstruction of continuous language from non-invasive brain recordings. Nature Neuroscience 2023.

- [4] Algonauts Project 2025. MIT CSAIL.

- [5] Baltrusaitis, Ahuja, Morency. Multimodal machine learning: a survey and taxonomy. TPAMI 2018.

- [6] Radford et al. Learning transferable visual models from natural language supervision (CLIP). ICML 2021.

- [7] Alayrac et al. Flamingo: a visual language model for few-shot learning. NeurIPS 2022.

- [8] Girdhar et al. ImageBind: one embedding space to bind them all. CVPR 2023.

- [9] Jumper et al. Highly accurate protein structure prediction with AlphaFold. Nature 2021.

- [10] Acosta, Falcone, Rajpurkar, Topol. Multimodal biomedical AI. Nature Medicine 2022.

- [11] Ferrucci et al. Building Watson: an overview of the DeepQA project. AI Magazine 2010.

- [12] Berns and Moore. A neural predictor of cultural popularity. Journal of Consumer Psychology 2012.