Why this review exists

Forward neural encoding has moved from an academic neuroscience subfield to an applied prediction tool in the last three years. The combination of TRIBE v2 (Meta FAIR), MindEye and MindEye2, the Huth Lab semantic atlas work, and the Algonauts benchmark constitutes a genuinely new technology stack for predicting cortical response to naturalistic stimuli.

Any company doing serious behavioral prediction in 2026 should know this stack cold. This is an honest reviewer's map: what each system does, where it is strong, where it is weak, and what it does not do. It is written so a researcher can evaluate a vendor claim without having to read the underlying preprints.

Commercial vendors make neural claims with no reference to the open stack. Reviewers need a map. This is one.

Definitions

- Forward encoding model. Maps stimulus features to predicted brain response. Inverse of decoding.

- Decoding model. Maps brain response back to stimulus or cognitive state. Opposite direction.

- Naturalistic stimuli. Video, audio, text, mixed. Contrast with controlled single-feature laboratory stimuli.

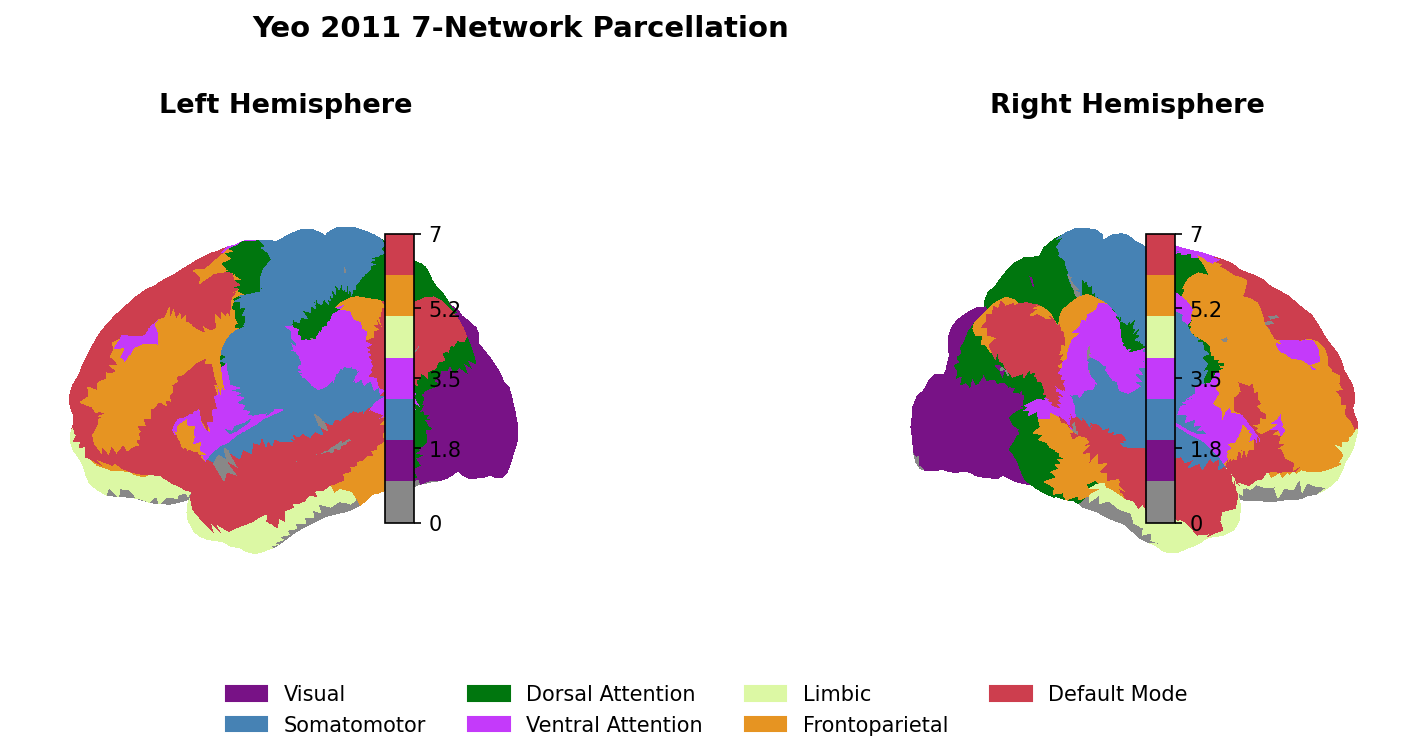

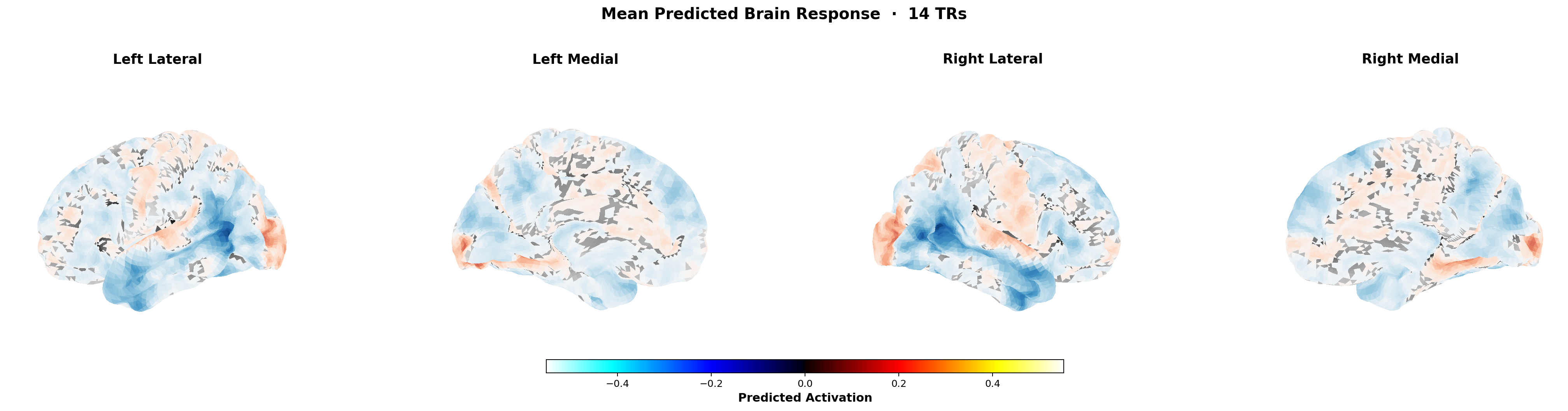

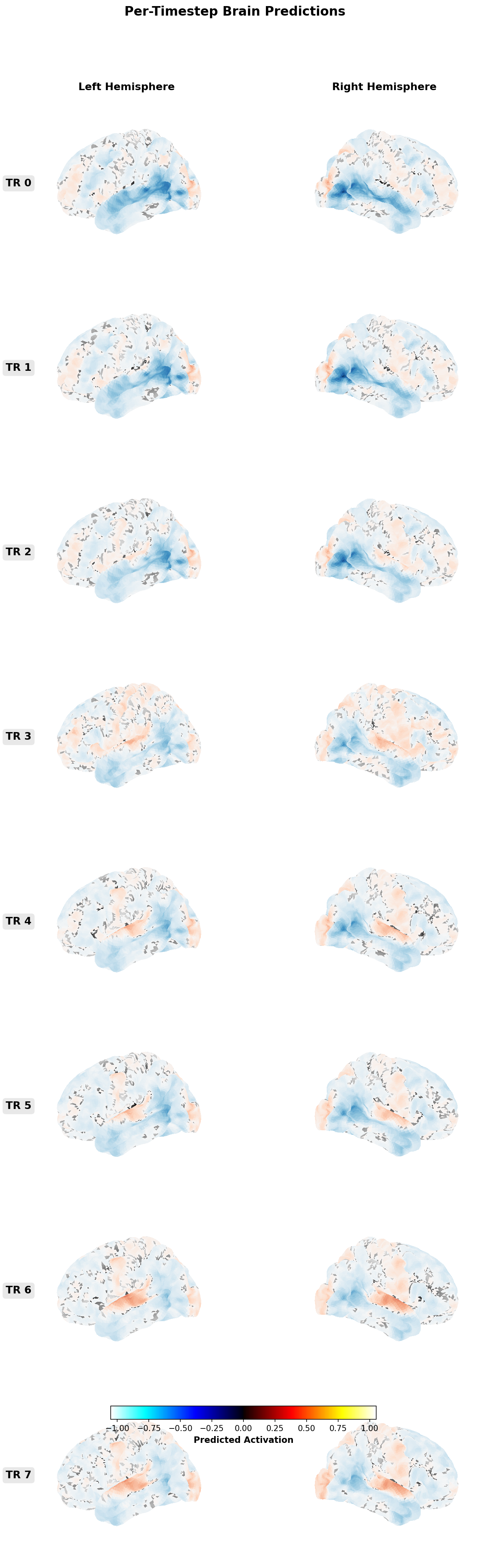

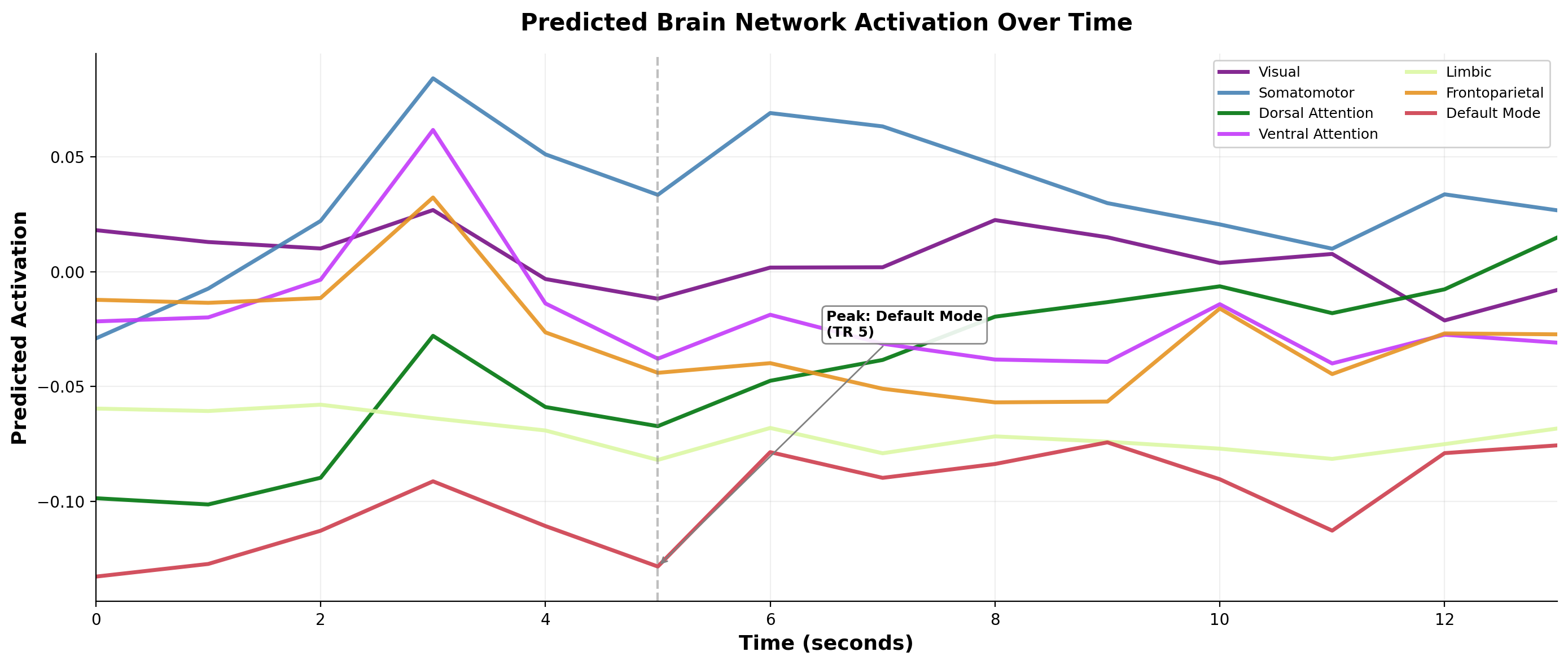

- Voxel-level prediction. A voxel is the spatial unit of fMRI. A well-trained encoder outputs a predicted BOLD response per voxel per time step.

- Inter-subject correlation (ISC). Measures consistency of neural response across viewers. Used heavily in naturalistic paradigms to filter noise.
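The definitions above can be made concrete with a small sketch. It fits a linear forward encoding model with ridge regression, scores it by per-voxel Pearson correlation, and computes a leave-one-out ISC. Everything here is an illustrative assumption: the data are synthetic, the function names are hypothetical, and ridge regression is a common baseline rather than the method of any system reviewed below.

```python
import numpy as np

def fit_ridge_encoder(features, bold, alpha=1.0):
    """Fit a linear forward encoder: features (T, F) -> BOLD (T, V)."""
    n_features = features.shape[1]
    gram = features.T @ features + alpha * np.eye(n_features)
    return np.linalg.solve(gram, features.T @ bold)  # weights, shape (F, V)

def voxelwise_correlation(predicted, observed):
    """Pearson r between two (T, V) arrays, computed separately per voxel."""
    p = predicted - predicted.mean(axis=0)
    o = observed - observed.mean(axis=0)
    denom = np.linalg.norm(p, axis=0) * np.linalg.norm(o, axis=0)
    return (p * o).sum(axis=0) / denom

def leave_one_out_isc(responses):
    """ISC per voxel for responses of shape (S, T, V).

    Each subject is correlated with the mean of all other subjects,
    then the per-subject correlations are averaged.
    """
    scores = []
    for s in range(responses.shape[0]):
        others = np.delete(responses, s, axis=0).mean(axis=0)
        scores.append(voxelwise_correlation(responses[s], others))
    return np.mean(scores, axis=0)

# Synthetic demo (all sizes and noise levels are arbitrary assumptions).
rng = np.random.default_rng(0)
n_train, n_test, n_features, n_voxels, n_subjects = 400, 100, 16, 50, 4
true_weights = rng.normal(size=(n_features, n_voxels))

x_train = rng.normal(size=(n_train, n_features))
x_test = rng.normal(size=(n_test, n_features))
y_train = x_train @ true_weights + rng.normal(size=(n_train, n_voxels))
y_test = x_test @ true_weights + rng.normal(size=(n_test, n_voxels))

weights = fit_ridge_encoder(x_train, y_train, alpha=10.0)
test_r = voxelwise_correlation(x_test @ weights, y_test)

# Simulated group: a shared stimulus-driven signal plus subject-specific noise.
shared = x_test @ true_weights
group = shared[None] + rng.normal(size=(n_subjects, n_test, n_voxels))
isc = leave_one_out_isc(group)

print(f"mean held-out r: {test_r.mean():.2f}, mean ISC: {isc.mean():.2f}")
```

The encoder is scored on time points it never saw during fitting, which is the minimum standard a vendor's reported accuracy should also meet.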

TRIBE v2 (Meta FAIR)

TRIBE v2 is a transformer-based forward encoding model trained across subjects and stimuli. Architecture: a multi-modal backbone fusing DINOv2 visual, wav2vec 2.0 audio, and Llama-family language features; cross-attention fusion; subject-specific readout heads on a shared latent. Training: over 1,000 hours of fMRI from approximately 720 subjects, drawn from Algonauts 2023, Natural Scenes Dataset, and movie-watching paradigms (CNeuroMod Friends, MOMA, StudyForrest).
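To illustrate what "subject-specific readout heads on a shared latent" means structurally, here is a minimal forward-pass sketch. It is not TRIBE v2 code: the class name, dimensions, and random weights are hypothetical, and plain feature concatenation stands in for the cross-attention fusion described above.

```python
import numpy as np

class SharedLatentEncoder:
    """Toy encoder: fused features -> shared latent -> per-subject voxels."""

    def __init__(self, modality_dims, latent_dim, voxels_per_subject, seed=0):
        rng = np.random.default_rng(seed)
        total_dim = sum(modality_dims.values())
        self.modalities = list(modality_dims)
        # One projection shared by every subject.
        self.shared = rng.normal(scale=total_dim ** -0.5,
                                 size=(total_dim, latent_dim))
        # One linear readout head per subject; voxel counts may differ.
        self.readouts = {
            subject: rng.normal(scale=latent_dim ** -0.5,
                                size=(latent_dim, n_voxels))
            for subject, n_voxels in voxels_per_subject.items()
        }

    def latent(self, features):
        # Concatenation is a stand-in for cross-attention fusion (assumption).
        fused = np.concatenate([features[m] for m in self.modalities], axis=1)
        return np.tanh(fused @ self.shared)

    def predict(self, features, subject):
        return self.latent(features) @ self.readouts[subject]

# Hypothetical feature sizes and subject IDs, chosen only for the demo.
rng = np.random.default_rng(1)
n_time = 12
features = {
    "video": rng.normal(size=(n_time, 8)),
    "audio": rng.normal(size=(n_time, 4)),
    "text": rng.normal(size=(n_time, 6)),
}
model = SharedLatentEncoder(
    modality_dims={"video": 8, "audio": 4, "text": 6},
    latent_dim=10,
    voxels_per_subject={"sub-01": 30, "sub-02": 25},
)
pred_a = model.predict(features, "sub-01")
pred_b = model.predict(features, "sub-02")
print(pred_a.shape, pred_b.shape)
```

The design point is that the latent is computed once per stimulus and only the last linear map differs by subject, which is what makes adapting to a new subject cheap relative to retraining the backbone.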

Strengths. Cross-subject generalization (zero-shot to held-out subjects). Open weights. Benchmark performance on Algonauts. Full explainer: TRIBE v2 explained.

Limitations. Predicts BOLD, not behavior. Requires careful feature extraction. fMRI temporal resolution (seconds, not milliseconds) constrains what you can ask. Research-only license in current release.
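The temporal-resolution limit can be seen in a short simulation. The sketch below convolves a brief stimulus event with a textbook double-gamma hemodynamic response function and samples the result at the scanner repetition time. The HRF parameters, the 10 Hz feature rate, and the 1.5 s TR are assumptions for illustration, not values taken from TRIBE v2 or any dataset named here.

```python
import math
import numpy as np

def canonical_hrf(dt, duration=30.0):
    """Double-gamma HRF sampled every dt seconds (textbook parameters)."""
    t = np.arange(0.0, duration, dt)
    peak = t ** 5 * np.exp(-t) / math.gamma(6)          # gamma pdf, shape 6
    undershoot = t ** 15 * np.exp(-t) / math.gamma(16)  # gamma pdf, shape 16
    hrf = peak - undershoot / 6.0
    return hrf / hrf.sum()

def features_to_tr(feature, dt, tr):
    """Convolve a feature time series with the HRF, then sample once per TR."""
    hrf = canonical_hrf(dt)
    convolved = np.convolve(feature, hrf)[: len(feature)]
    step = int(round(tr / dt))
    return convolved[::step]

dt, tr = 0.1, 1.5            # assumed feature sampling step and scanner TR
feature = np.zeros(600)      # 60 s of a single stimulus feature at 10 Hz
event_time = 10.0
feature[int(event_time / dt)] = 1.0  # one 100 ms event at t = 10 s

sampled = features_to_tr(feature, dt, tr)
peak_time = np.argmax(sampled) * tr
delay = peak_time - event_time
print(f"{len(sampled)} samples, response peaks {delay:.1f} s after the event")
```

A 100 ms event produces a response that peaks several seconds later and is observed only once per TR, so questions about sub-second ordering of events within a creative are outside what a BOLD-based encoder can resolve.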

MindEye and MindEye2 (MedARC, Princeton, Stability AI)

MindEye[1] and MindEye2[2] are primarily decoding systems: they reconstruct viewed images from fMRI. Although they run in the opposite direction from encoding, they are instructive because the aligned representation space they establish is informative about encoding as well.

Training data. Natural Scenes Dataset (Allen et al. 2022 Nature Neuroscience[3]). Eight subjects viewed over 70,000 unique scenes in total, across 30-40 scanning sessions per subject. Large, high-SNR, foundational.

Strengths. High-fidelity image reconstruction. Cross-subject alignment techniques (MindEye2 trains a shared subject-agnostic space and adapts per subject).

Limitations. Static images, not video. Decoding is not forecasting. Useful as an alignment reference for encoding models; not directly a content-prediction tool.

Huth Lab semantic atlas (UT Austin)

Alexander Huth's lab built encoding models trained on story-listening fMRI that map semantic representations across cortex (Huth et al. Nature 2016[4]). More recently, Tang et al. Nature Neuroscience 2023[5] extended this to continuous language reconstruction: a semantic decoder that turns fMRI into text.

Strengths. Elegant language-level mapping. Reproducible across studies. Directly useful for linguistic signal components of content prediction.

Limitations. Language-specific. Requires long training sessions per subject. Per-subject calibration overhead.

Algonauts Project (2019–2025)

The Algonauts Project[6] is a public benchmark for encoding models. Its editions have covered static images, short video clips (2021), the Natural Scenes Dataset (2023), and multimodal movie watching (2025). It is the reference for comparing architectures: 3D ResNet, VideoMAE, SlowFast, multimodal transformers.

Strengths. Standardized evaluation. Open data. Encourages reproducible progress.

Limitations. Benchmark fit is not the same as production reliability. Out-of-distribution generalization is still open. A model that wins Algonauts 2025 may or may not generalize to 2026 advertising creative.
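The gap between benchmark fit and out-of-distribution behavior can be sketched with a simulation. The score below is a noise-normalized squared correlation, a style of metric used by encoding benchmarks; whether a given Algonauts edition uses exactly this form is not claimed here. The data, the tanh nonlinearity, and the feature scales are all assumptions chosen to make the effect visible, and the noise ceiling is computed from the known simulated signal (in real data it would be estimated from repeated presentations).

```python
import numpy as np

def voxelwise_r(predicted, observed):
    """Pearson r per voxel between two (T, V) arrays."""
    p = predicted - predicted.mean(axis=0)
    o = observed - observed.mean(axis=0)
    denom = np.linalg.norm(p, axis=0) * np.linalg.norm(o, axis=0)
    return (p * o).sum(axis=0) / denom

def noise_normalized_score(predicted, observed, noise_ceiling):
    """Median over voxels of r^2 divided by the explainable variance."""
    r_squared = voxelwise_r(predicted, observed) ** 2
    valid = noise_ceiling > 0
    return float(np.median(r_squared[valid] / noise_ceiling[valid]))

rng = np.random.default_rng(0)
n_time, n_features, n_voxels = 500, 8, 40
true_weights = rng.normal(size=(n_features, n_voxels))

def simulate(feature_scale):
    """Saturating (tanh) voxel responses to features of a given scale."""
    x = rng.normal(scale=feature_scale, size=(n_time, n_features))
    signal = np.tanh(x @ true_weights)
    y = signal + rng.normal(scale=0.1, size=signal.shape)
    ceiling = signal.var(axis=0) / y.var(axis=0)  # known only in simulation
    return x, y, ceiling

# Fit a linear encoder on the "benchmark" distribution.
x_train, y_train, _ = simulate(feature_scale=0.3)
weights = np.linalg.lstsq(x_train, y_train, rcond=None)[0]

# Evaluate on held-out data from the same distribution and a shifted one.
x_in, y_in, ceiling_in = simulate(feature_scale=0.3)
x_ood, y_ood, ceiling_ood = simulate(feature_scale=3.0)

in_score = noise_normalized_score(x_in @ weights, y_in, ceiling_in)
ood_score = noise_normalized_score(x_ood @ weights, y_ood, ceiling_ood)
print(f"in-distribution: {in_score:.2f}, shifted: {ood_score:.2f}")
```

The same weights score well on held-out data that resembles the training features and noticeably worse once the features leave that range. A benchmark number alone says nothing about which regime a given production input falls into.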

Natural Scenes Dataset and the training data landscape

NSD (Allen et al. 2022[3]) is public, large, high-SNR. Foundational for image encoding research. The limitation for content prediction work is that NSD consists of static images, not video, so its results need careful extrapolation before they apply to ad-format work.

Broader data landscape: CNeuroMod hosts movie-watching fMRI for a small number of subjects at extremely high depth. OpenNeuro[7] aggregates most shareable neuroimaging datasets. The field is data-rich in some directions (images, short clips), data-poor in others (long-form ads, cross-cultural content, longitudinal naturalistic viewing). Understanding where the training distribution does and does not match your use case is the most important diligence step.

Comparison matrix

| Model | Input | Output | Training data | Status | Useful for content prediction? |
|---|---|---|---|---|---|
| TRIBE v2 | Video + audio + text | BOLD per voxel | Algonauts, NSD, movie-watching (over 1,000 h) | Open weights | Yes |
| MindEye / MindEye2 | fMRI | Reconstructed images | NSD (8 subjects) | Open weights | Reverse direction, useful for alignment |
| Huth semantic atlas | Listening-audio or reading-text | Semantic activation maps | Huth lab story-listening corpus | Academic | With caveats |
| Algonauts submissions | Video clips | Voxel-wise activation (benchmark) | CNeuroMod Friends | Varies | Benchmark only |

What brain encoding does not do

Forward encoding does not replace outcome calibration. Predicting BOLD is not predicting sales, engagement, or retention (see the calibration page). Every encoding model must be paired with a separate predictor from neural response to behavioral outcome, and that predictor has its own error bars.
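A minimal sketch of that second stage, under stated assumptions: a single hypothetical neural summary score per ad, a hypothetical behavioral outcome, a plain linear fit, and a bootstrap to put error bars on the fitted slope. The data are synthetic and the function name is illustrative; real calibration would use held-out campaigns and a richer model.

```python
import numpy as np

def bootstrap_calibration(neural_score, outcome, n_boot=1000, seed=0):
    """Fit outcome ~ slope * neural_score + intercept; bootstrap the slope.

    Returns the mean bootstrap slope and a 95% percentile interval.
    """
    rng = np.random.default_rng(seed)
    n = len(outcome)
    slopes = np.empty(n_boot)
    for b in range(n_boot):
        idx = rng.integers(0, n, size=n)  # resample ads with replacement
        slope, _intercept = np.polyfit(neural_score[idx], outcome[idx], deg=1)
        slopes[b] = slope
    low, high = np.percentile(slopes, [2.5, 97.5])
    return float(slopes.mean()), float(low), float(high)

# Synthetic demo: 40 ads, a weak true relationship, substantial noise.
rng = np.random.default_rng(42)
n_ads = 40
neural_score = rng.normal(size=n_ads)                   # e.g. mean predicted response
outcome = 0.5 * neural_score + rng.normal(size=n_ads)   # e.g. normalized engagement

mean_slope, low, high = bootstrap_calibration(neural_score, outcome)
print(f"slope {mean_slope:.2f}, 95% interval [{low:.2f}, {high:.2f}]")
```

With a few dozen ads the interval on the slope is wide, which is the point: the uncertainty of the neural-to-outcome step stacks on top of the encoder's own error and has to be reported separately.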

Cross-subject generalization has improved dramatically but is not solved. Application to new populations, languages, or platforms requires caution and usually fine-tuning.

Open data and open weights accelerate the field. Closed commercial encoders that do not publish architecture, training data, or benchmark results have no credible way to claim advantage over the open stack. The right skeptical posture toward any such vendor is "show the calibration, or assume the encoder is not better than the open baseline."

What OpenAffect uses and why

We build on the open stack. We use TRIBE-class encoders for the neural signal family, and Huth Lab semantic work informs our linguistic modeling. We evaluate against Algonauts and NSD benchmarks as sanity checks. Our commercial model is the fusion layer above the encoder, with calibration against public ad performance (see calibration), not a replacement for the open models.

The durable commercial position is infrastructure that integrates with open research rather than competing against it. The category that tries to own proprietary encoders and refuses to publish benchmarks will lose to the one that builds on top of open science.

References

- [1] Scotti et al. MindEye: reconstructing visual experiences from brain activity. NeurIPS 2023.

- [2] MindEye2: shared-subject models enable fMRI-to-image with one hour of data. ICML 2024.

- [3] Allen et al. A massive 7T fMRI dataset (NSD). Nat Neuroscience 2022.

- [4] Huth et al. Natural speech reveals the semantic maps that tile human cerebral cortex. Nature 2016.

- [5] Tang, LeBel, Jain, Huth. Semantic reconstruction from non-invasive brain recordings. Nat Neuroscience 2023.

- [6] Algonauts Project.

- [7] OpenNeuro.

- [8] Jain and Huth. Incorporating context into language encoding models for fMRI. 2018.

- [9] Caucheteux, Gramfort, King. Evidence of a predictive coding hierarchy. Nat Comms 2022.

- [10] Schrimpf et al. The neural architecture of language. PNAS 2021.

- [11] Yamins et al. Performance-optimized hierarchical models predict neural responses. PNAS 2014.

- [12] Meta FAIR research page.