The four tiers

Pre-publication ad testing in 2026 has four tiers. Platform-native tools are free and shallow. Traditional survey-based testing is slower and more robust. Neuro and biometric testing carries moderate cost and moderate predictive power. AI-based forward prediction is cheap, fast, and new. You do not pick one. You stack them by decision risk.

| Tier | Approach | Cost | Speed | Strength |
|---|---|---|---|---|
| 1 | Platform-native | Free | Minutes | Format validation, in-platform A/B |
| 2 | Survey-based | $1K–$75K | Hours to days | Emotional and attention metrics |
| 3 | Neuro / biometric | $300–$15K per asset | Days to weeks | Subconscious response detail |
| 4 | AI forward prediction | $0–$5K per batch | Minutes | Pre-production scoring at scale |

The bigger the media budget, the more tiers you use. Below ten thousand dollars, Tier 1 is enough. Above a hundred thousand dollars, you stack three.

Tier 1: Platform-native, free

Meta Creative Hub previews format compliance and safe-area rendering. It does not score creative. Advantage+ Creative applies automated variations (crop, copy, brightness) and reports in-platform lift versus the original. Meta Split Testing uses Bayesian analysis. The industry rule of thumb: 95 percent confidence plus 1,000 conversions per cell before calling a winner.
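The rule of thumb above can be sketched as a small decision function. This is a minimal illustration, not Meta's implementation: it assumes a Beta-Binomial model with uniform Beta(1, 1) priors, estimates the probability that one cell beats the other by Monte Carlo sampling, and treats "95 percent confidence" as a 0.95 posterior probability. The function names and defaults are hypothetical.

```python
import random


def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=20000, seed=0):
    """Monte Carlo estimate of P(rate_B > rate_A) under Beta(1, 1) priors.

    conv_* are conversion counts, n_* are impressions (or sessions) per cell.
    """
    rng = random.Random(seed)  # fixed seed so repeated reads agree
    wins = 0
    for _ in range(draws):
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        if rate_b > rate_a:
            wins += 1
    return wins / draws


def call_winner(conv_a, n_a, conv_b, n_b, min_conversions=1000, threshold=0.95):
    """Return "A", "B", or None when the test should keep running.

    Both gates from the rule of thumb must pass: enough conversions in
    every cell, and a posterior probability at or above the threshold.
    """
    if min(conv_a, conv_b) < min_conversions:
        return None  # under-powered: do not call it yet
    p_b = prob_b_beats_a(conv_a, n_a, conv_b, n_b)
    if p_b >= threshold:
        return "B"
    if 1 - p_b >= threshold:
        return "A"
    return None


# Illustrative counts only, not real campaign data.
print(call_winner(1000, 50_000, 1200, 50_000))
print(call_winner(100, 5_000, 150, 5_000))
```

The conversion floor is checked first on purpose: a cell with a few hundred conversions can show a high posterior probability that later washes out.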

Use this tier when the spend is under ten thousand dollars, the variation is narrow (copy, thumbnail, duration), and the decision is mostly delivery-adjacent. Above that threshold, Tier 1 alone leaves too much variance on the table.

Tier 2: Survey-based pre-test

System1 (LSE:SYS1, CIO Orlando Wood) scores ads on a 1.0 to 5.9 Star Rating plus a Spike Rating via FaceTrace across roughly 150 respondents. IPA data ties 4- to 5-star ads to three to five times larger market share gains[1]. Wood's Lemon and Look Out IPA monographs[1][2] formalize the right-brain creative features that correlate with System1 scores.

Nielsen BASES Innovation Testing historically ran $30K to $75K per concept. Kantar Link AI (launched 2021) scores short-form video in roughly fifteen minutes at $1K to $2.5K per ad versus $10K+ for full Link, with reported correlation of 0.75 to 0.85 between AI and full Link[3].

The evidence base for why emotion and attention metrics matter is Binet and Field's The Long and the Short of It[4]: emotional broad-reach campaigns deliver roughly two times the business effects over long horizons. Karen Nelson-Field's Attention Economy[5] validated attention-seconds as a short-term sales-lift predictor against Meta data.

Tier 3: Neuro and biometric

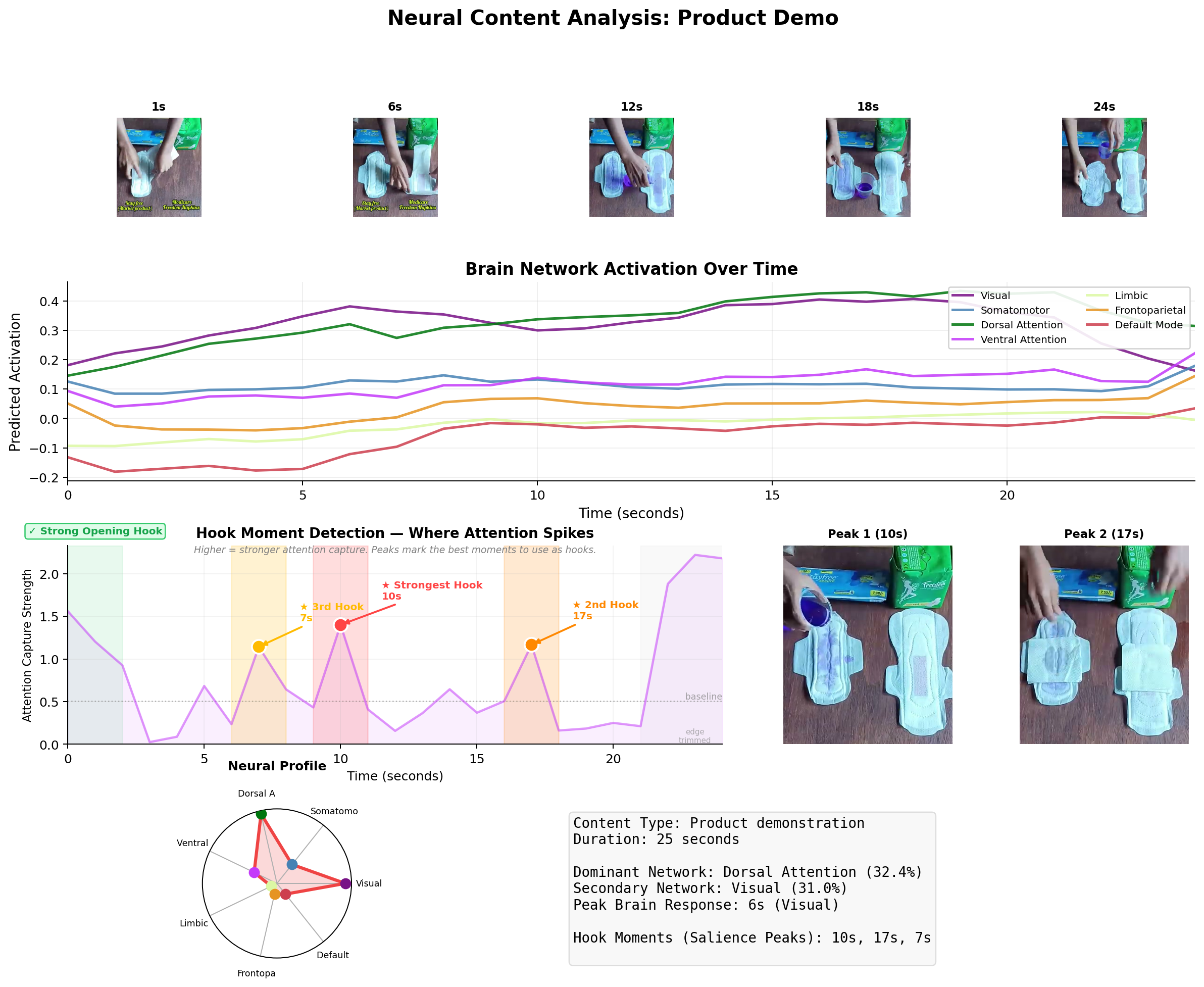

Neurons Inc Predict (Ramsoy, Copenhagen) is SaaS at $300 to $1,000 per asset, trained on EEG plus eye-tracking data, claiming roughly 90 percent agreement with lab neuro. Smart Eye, which acquired Affectiva in 2021 for $73.5M, runs facial coding via Media Analytics. Tobii Sticky (acquired from Sticky.ai) offers webcam-based eye tracking.

The honest caveat on this tier is Varan et al. in JAR 2015[6]. The ARF Neuro 1 and Neuro 2 vendor comparison found opaque constructs and weak inter-vendor agreement. Different vendors scored the same ads differently. Ask for calibration studies against field outcomes before writing a contract.

Tier 4: AI forward prediction

This tier has emerged in the last two years. Predictive models trained on labeled creative performance (Memorable, now inside Reddit; DAIVID; CreativeX; VidMob) score unreleased creative against historical distributions. Forward brain encoding (TRIBE v2; see TRIBE v2 explained) adds neural-response prediction without scanning.
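One simple way to read "scoring against historical distributions" is a percentile rank: where does the new asset's predicted score fall among past assets with known outcomes? The sketch below assumes that reading. It is not any vendor's method, and the function name and the sample scores are hypothetical.

```python
from bisect import bisect_left, bisect_right


def percentile_rank(score, historical_scores):
    """Percentile (0 to 100) of `score` within `historical_scores`.

    Ties count as half below and half above (the mid-rank convention).
    """
    ordered = sorted(historical_scores)
    if not ordered:
        raise ValueError("historical_scores must not be empty")
    below = bisect_left(ordered, score)          # strictly lower scores
    ties = bisect_right(ordered, score) - below  # equal scores
    return 100.0 * (below + 0.5 * ties) / len(ordered)


# Hypothetical model scores for past creative, for illustration only.
history = [10, 20, 30, 40, 50]
print(percentile_rank(30, history))  # 50.0
print(percentile_rank(55, history))  # 100.0
```

A percentile is only as meaningful as the library behind it, so it helps to rank against past assets in the same category and format.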

Swayable runs pre-launch RCTs on digital panels. Clients include Unilever, Patagonia, and the Biden 2020 campaign. Upwave (formerly Survata) runs Pre-Flight brand-lift pre-tests. Synthetic audience products like Evidenza (B2B), Yabble Virtual Audiences, and Zappi Amplify Ads are in the $3K range and work for directional reads. The honest limits of synthetic panels are covered separately (see synthetic respondents vs focus groups).

The foundation for this tier is Venkatraman et al. in JMR 2015[7]. Ventral striatum fMRI signal added an incremental R² of 0.10 to 0.14 on market-level sales beyond traditional measures across thirty TV ads. That is a real effect size, and it is what AI forward prediction is trying to reproduce at commodity cost.
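For readers unfamiliar with the term, incremental R² is the gain in explained variance when a new predictor is added to a baseline model. The general definition, written with labels chosen here for illustration rather than taken from the paper's exact specification, is:

$$
\Delta R^2 = R^2_{\text{traditional} + \text{fMRI}} - R^2_{\text{traditional}}
$$

Read this way, a $\Delta R^2$ of 0.10 to 0.14 means the fMRI signal accounted for a further 10 to 14 percentage points of variance in the sales outcome beyond what the traditional measures already explained.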

Decision framework

Match tiers to budget.

- Under $10K. Tier 1 only. Don't over-test an ad that will not pay for its own instrumentation.

- $10K to $100K. Tier 1 plus Tier 2 or Tier 4. Pick Tier 2 for brand. Pick Tier 4 for iteration speed.

- $100K and up. Stack Tier 2 plus Tier 3 plus Tier 4. If the spend is in the hundreds of thousands, the cost of a Tier 3 run is negligible compared to the cost of launching bad creative at scale.

- Long-horizon brand work. Tier 2 emotional plus attention is non-negotiable. Binet-Field numbers are the reason.
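The rules above can be written as a small lookup. This is a sketch under stated assumptions: the boundaries are treated as half-open ranges (under $10K, $10K up to but not including $100K, $100K and up), and the function name and the `priority` and `long_horizon_brand` parameters are hypothetical labels for the choices described in the list.

```python
def recommend_tiers(budget_usd, priority="brand", long_horizon_brand=False):
    """Return the sorted list of tier numbers to run for a media budget.

    priority: "brand" or "speed"; only matters in the $10K to $100K band,
    where the choice is between Tier 2 (brand) and Tier 4 (iteration speed).
    """
    if budget_usd < 0:
        raise ValueError("budget_usd must be non-negative")
    if priority not in ("brand", "speed"):
        raise ValueError('priority must be "brand" or "speed"')

    if budget_usd < 10_000:
        tiers = {1}
    elif budget_usd < 100_000:
        tiers = {1, 2 if priority == "brand" else 4}
    else:
        tiers = {2, 3, 4}

    # Long-horizon brand work always includes the Tier 2 survey pre-test.
    if long_horizon_brand:
        tiers.add(2)
    return sorted(tiers)


print(recommend_tiers(5_000))                      # [1]
print(recommend_tiers(50_000, priority="speed"))   # [1, 4]
print(recommend_tiers(250_000))                    # [2, 3, 4]
```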

What a blended workflow looks like

A concrete seven-day pre-launch sequence for a creative team running above $100K spend:

- Day 1. Tier 4 predictive score on cut 1. Read it as a shape, not a number.

- Day 2. Rework low-scoring sections. Tier 4 again on cut 2.

- Day 3. Tier 2 survey validation on the top three variants. System1 or Kantar Link AI.

- Days 4–5. Tier 1 live split test on cheap inventory. Read Learning Phase graduation (see the creative testing benchmarks).

- Days 6–7. Scale the winner. Rotate in Tier 4 scored variants weekly to stay ahead of fatigue.

What comes next

The next generation of pre-testing is brain encoding models sitting on top of labeled creative intelligence libraries, producing pre-publication scores that integrate neural, linguistic, cultural, and historical signals (see the scoring framework and the four signals).

Each tier in this piece will still exist in 2028. The tier that grows fastest is Tier 4. The question is whether vendors in that tier will publish their calibration.

References

- [1] Orlando Wood. Lemon: How the Advertising Brain Turned Sour. IPA 2019.

- [2] Orlando Wood. Look Out: A Stark Warning for Marketers. IPA 2021.

- [3] Kantar Link AI.

- [4] Binet and Field. The Long and the Short of It. IPA 2013.

- [5] Nelson-Field. The Attention Economy. Amplified Intelligence 2020.

- [6] Varan et al. How reliable are neuromarketers' measures? JAR 2015.

- [7] Venkatraman et al. Predicting advertising success beyond traditional measures. JMR 2015.

- [8] Meta Split Testing.

- [9] System1 Test Your Ad.

- [10] Neurons Inc Predict.

- [11] Swayable.